This is a follow-up to this post, were attempts to find a line-free set in with at least 150 elements failed, and where we’ve agreed that programming the search would be better.

Thinking about this I’ve come up with the following strategy (let’s work with but the same can de done with

), I haven’t tested it yet I’m just spelling it out:

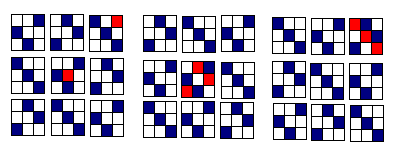

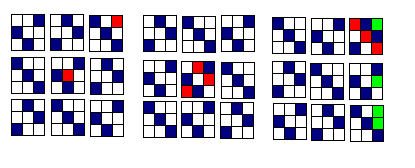

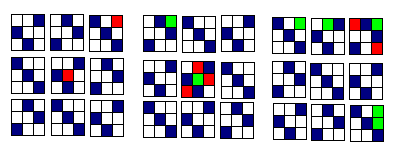

1) start with the blank lattice:

2) then at each lattice site (i.e word ) attach two things: the list of lines it belongs to, and the total number of such lines (a kind of weight, let’s call it

)

for example for the lattice site 11111 we have all the following lines:

with one wildcard 1111*, 111*1, 11*11, 1*111, *1111; with two wildcards 111**, 11*1*, 1*11*, *111*, 11**1, 1*1*1, *11*1, 1**11, *1*11, **111; and so on…

3) then order the lattice sites according to their weight from high to low, and remove sites starting from those with highest weight, until the remaining set is line-free.

That is, my idea here is akin to Fourier analysis: do the big features first (here: high intersections, a kind of backbone) then do the fine details (the lines with few intersections, the flesh). Of course for this to work we have to hope that there are “enough” sites with high to moderate weight, so that we can remove as few sites as possible, and that the procedure is “monotone” otherwise all kinds of local minima issues may arise.

I’m going to try out the idea on first to see if we do recover what we already know, and then move on to

.

Note in particular that if this idea is correct: the 11..111 site should always be removed since it has the heighest weight, but also that to find the biggest line-free sets for a given one should not re-use the line free sets at

but instead recompute the weights and do the search accordingly.

I’ll be offline a few hours and back this afternoon with some code hopefully.